Decentralized AI Marketplaces: The Convergence of Web3 and Artificial Intelligence

Introduction

The last decade has seen two technological revolutions unfold in parallel: the explosive growth of artificial intelligence (AI) and the emergence of Web3, a decentralized vision for the internet. AI, driven by data and computational power, is transforming industries from healthcare to finance. Meanwhile, Web3 is challenging centralized systems by giving power back to users through decentralized networks, smart contracts, and tokenized incentives.

But what happens when these two forces collide?

Enter decentralized AI marketplaces: platforms that merge AI’s computational intelligence with Web3’s decentralization, creating a new paradigm where data, models, and rewards are shared openly and equitably. These marketplaces could dismantle the monopolistic control of AI by big tech and usher in a more democratized future of intelligence.

In this article, we’ll explore why decentralization is critical to the future of AI, how Web3 offers the infrastructure to make it happen, and what opportunities and challenges lie ahead.

Why Centralized AI Is Broken

Artificial intelligence today operates under the shadow of centralized control. Big tech companies such as Google, OpenAI, Meta, and Amazon dominate the AI space by leveraging proprietary data, massive compute infrastructure, and tightly controlled model access. This centralized model, while efficient in producing advanced tools, creates deep-rooted structural problems:

1.1 Data Monopolies

At the heart of any effective AI system is data. The more diverse and voluminous the data, the more accurate the models become. However, access to these datasets is restricted to a handful of players who gather user data through closed platforms. These monopolies result in:

- Data Biases: A lack of diverse datasets creates models that reflect the biases of their creators or the dominant user group.

- Restricted Access: Smaller AI startups or independent researchers are locked out of model training and improvement, stifling innovation.

1.2 Black Box Decision-Making

One of the biggest critiques of modern AI is its opacity. Users are often unaware of how decisions are made, whether in loan approvals, content recommendations, or hiring processes. In centralized AI:

- There’s little to no transparency around how algorithms make decisions.

- There are limited recourse mechanisms for users affected by AI decisions.

- Accountability is deferred to internal compliance teams or PR damage control, not democratic oversight.

1.3 Unequal Economic Distribution

AI generates enormous value, but that value accrues almost exclusively to its owners. The people who contribute to training data (end-users, communities, public institutions) rarely see a return. This has created a lopsided digital economy where:

- Corporations monetize data while users receive “free” services.

- Model developers are paid once, but the AI continues to generate profit.

- Open-source contributions are co-opted by closed-source enterprises.

These structural failures call for a new framework, one that decentralizes power, redistributes value, and reinstates user agency. That’s where Web3 steps in.

How Web3 Reinvents the AI Value Chain

Web3 is more than a buzzword: it’s an entire philosophy centered around decentralization, transparency, and user ownership. When these principles intersect with artificial intelligence, they lay the groundwork for an entirely new paradigm: decentralized AI marketplaces. In this model, every layer of the AI value chain, from data and compute to governance and monetization, can be reimagined.

2.1 Data Sovereignty and Provenance

One of the most compelling aspects of Web3 is its ability to return data ownership to individuals. Through technologies like self-sovereign identity (SSI) and decentralized storage (IPFS, Filecoin, Arweave), individuals and organizations can control access to their data and monetize it directly.

Imagine:

- Medical patients opting into research datasets via smart contracts.

- Social media users licensing their content to train AI, earning micro-rewards.

- Creators proving the origin of their data contributions through on-chain timestamps.

With this model, datasets aren’t hidden behind corporate firewalls; they’re part of open economies with traceable provenance and permissioned access.
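The on-chain timestamp idea can be illustrated with a minimal sketch. It assumes a contributor commits only a hash of their data plus some metadata, and that the resulting record is what gets anchored on a chain. The `ProvenanceRecord` type, its fields, and the helper names are hypothetical and not taken from any specific protocol; a real chain would supply the timestamp from block time.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ProvenanceRecord:
    """Hypothetical record a contributor could anchor on-chain."""
    contributor: str   # e.g. a wallet address or DID (format is an assumption)
    content_hash: str  # SHA-256 hex digest of the raw data
    timestamp: int     # Unix time; a real chain would use the block timestamp


def make_record(contributor: str, data: bytes, timestamp: int) -> ProvenanceRecord:
    """Commit to the data by hashing it; the data itself stays off-chain."""
    return ProvenanceRecord(contributor, hashlib.sha256(data).hexdigest(), timestamp)


def record_id(record: ProvenanceRecord) -> str:
    """Deterministic ID: SHA-256 of the record's canonical JSON form."""
    canonical = json.dumps(asdict(record), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def verify(record: ProvenanceRecord, data: bytes) -> bool:
    """Check that the presented data matches the committed hash."""
    return hashlib.sha256(data).hexdigest() == record.content_hash
```

Anyone holding the original bytes can later call `verify` against the anchored record, while the record itself reveals nothing about the data beyond its hash.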

2.2 Compute Power as a Decentralized Resource

AI training is compute-intensive, which has traditionally favored companies with deep pockets and cloud infrastructure. Web3 introduces decentralized compute marketplaces like Golem, Akash, and iExec, which democratize access to compute power.

Benefits include:

- Lower barriers to entry for developers.

- Redundant, censorship-resistant compute networks.

- Token-based incentives for idle resources to join the network.

Rather than paying Amazon or Google, AI models can be trained across distributed nodes in a permissionless network, empowering under-resourced developers around the world.

2.3 Transparent, Tokenized Incentive Models

Web3-native tokens introduce programmable, automated value distribution. This redefines how contributors across the AI pipeline are compensated:

- Data contributors earn tokens based on usage.

- Model developers receive royalties whenever their models are accessed.

- Validators are rewarded for reviewing models, ensuring they’re safe, unbiased, and functional.

This “multi-sided incentive” system is governed by smart contracts and DAOs (Decentralized Autonomous Organizations), minimizing rent-seeking middlemen and encouraging open innovation.
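To make the automated value distribution concrete, here is a rough sketch of the arithmetic a payment-splitting contract might perform each time a model is accessed. It is written in Python for readability rather than in a smart contract language, and the recipient names, the basis-point shares, and the rule for assigning the rounding remainder are all illustrative assumptions.

```python
def split_payment(amount: int, shares_bps: dict[str, int]) -> dict[str, int]:
    """Split `amount` (in smallest token units) by basis-point shares.

    Shares must sum to 10,000 bps (100%). Integer division can leave a
    small remainder; this sketch assigns it to the largest shareholder.
    """
    if amount < 0:
        raise ValueError("amount must be non-negative")
    if sum(shares_bps.values()) != 10_000:
        raise ValueError("shares must sum to 10,000 basis points")

    payouts = {name: amount * bps // 10_000 for name, bps in shares_bps.items()}
    remainder = amount - sum(payouts.values())
    largest = max(shares_bps, key=shares_bps.get)
    payouts[largest] += remainder
    return payouts


# Hypothetical split: 30% data contributors, 60% model developer, 10% validators.
shares = {"data_contributors": 3_000, "model_developer": 6_000, "validators": 1_000}
payouts = split_payment(1_000, shares)
```

Working in integer token units, as on-chain code generally does, ensures that the payouts always add up exactly to the amount paid in.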

2.4 Composable and Permissionless Innovation

Decentralized AI marketplaces are modular by design. Developers can build on top of existing AI models, combine datasets, or remix tools with minimal friction. Think of it as:

- “DeFi for AI” — where primitives (like data, models, and compute) can be stitched together to build novel applications.

- Forkable innovation — if governance goes awry, the community can fork the project and evolve independently.

The open, interoperable nature of Web3 protocols nurtures an ecosystem where innovation is community-led and anti-fragile.

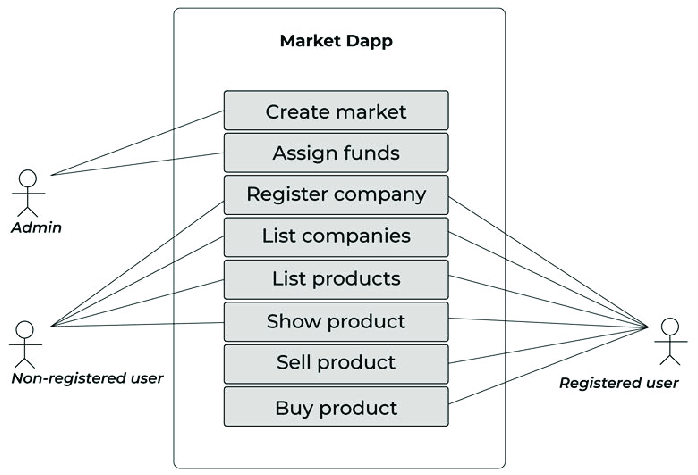

The Rise of Decentralized AI Protocols and Projects

As the worlds of Web3 and artificial intelligence converge, a new class of decentralized platforms is emerging, built to disrupt the status quo and distribute intelligence, data, and value more equitably. These projects don’t just theorize a better future; they’re building it in real time.

Let’s look at some of the leading protocols shaping the decentralized AI landscape.

3.1 SingularityNET: AI-as-a-Service on the Blockchain

Founded by Dr. Ben Goertzel (known for his work on the humanoid robot Sophia), SingularityNET aims to create a decentralized marketplace for AI services.

Core features:

- Marketplace for AI Models: Developers can publish their models, which others can access via API for a fee.

- Interoperability: Different AI services can combine autonomously to form more complex systems.

- Tokenized Access: The $AGIX token facilitates payments, staking, and governance.

With a vision for AGI (Artificial General Intelligence) that’s ethically aligned and publicly owned, SingularityNET represents a long-term play on open-source, decentralized intelligence.

3.2 Ocean Protocol: Unlocking Data for AI

Ocean Protocol addresses one of AI’s most critical bottlenecks — access to diverse, quality data without compromising privacy.

What it enables:

- Data Tokenization: Access to each dataset is represented by ERC-20 datatokens, which can be traded on decentralized exchanges.

- Compute-to-Data: Algorithms come to the data, not the other way around — preserving user privacy.

- Marketplace Integration: Users can buy, sell, or curate datasets with full on-chain transparency.

Ocean's $OCEAN token incentivizes data sharing and governance, enabling a future where data isn’t hoarded — it’s liquid.
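The compute-to-data idea can be sketched in a few lines. This is a toy illustration of the pattern only, not Ocean Protocol's API: the `DataCustodian` class, its `min_rows` threshold, and the rule that only scalar results may be released are assumptions made for the example.

```python
from numbers import Real
from statistics import mean
from typing import Callable, Sequence


class DataCustodian:
    """Hypothetical data holder: runs submitted algorithms locally and
    releases only scalar aggregates, never the underlying rows."""

    def __init__(self, rows: Sequence[dict], min_rows: int = 5) -> None:
        self._rows = list(rows)
        self._min_rows = min_rows

    def run(self, algorithm: Callable[[Sequence[dict]], object]) -> float:
        if len(self._rows) < self._min_rows:
            raise ValueError("dataset too small to release aggregates")
        # Pass copies so the algorithm cannot mutate the stored data.
        result = algorithm([dict(row) for row in self._rows])
        if isinstance(result, bool) or not isinstance(result, Real):
            raise TypeError("only scalar numeric results may leave the custodian")
        return float(result)


# Usage: the buyer submits an algorithm and receives only the aggregate.
custodian = DataCustodian([{"age": a} for a in (30, 35, 40, 45, 50)])
average_age = custodian.run(lambda rows: mean(r["age"] for r in rows))
```

A scalar-only rule is far from a complete privacy guarantee, since carefully chosen queries can still leak information about individuals; a production system would need sandboxing and stronger output controls.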

3.3 Bittensor: A Decentralized Intelligence Network

Perhaps one of the most novel architectures, Bittensor creates an open-source neural network where anyone can contribute to or consume intelligence, all while earning rewards.

Key characteristics:

- Proof-of-Intelligence: Contributors are evaluated based on the usefulness of their models to the network.

- Peer-to-Peer Validation: The network self-organizes to route queries to the most relevant model.

- $TAO Token: Incentivizes high-quality model contributions and ensures security via staking.

Bittensor borrows from Bitcoin’s incentive design, but instead of securing a ledger, it decentralizes the collective training and inference of machine learning models.

3.4 Fetch.ai: Autonomous Agents for Web3

Fetch.ai focuses on enabling decentralized economic agents: autonomous software bots that can negotiate, transact, and execute tasks on behalf of users.

Applications include:

- Decentralized delivery and logistics

- Energy trading networks

- Personalized smart city services

Its $FET token powers the ecosystem, allowing agents to communicate, access data, and complete tasks. Think of it as the automation layer for the decentralized internet.

3.5 Other Notables

- Numeraire by Numerai: Crowdsources data scientists to improve hedge fund models, rewarding performance with the $NMR token.

- Cortex: Deploys AI models on-chain, allowing dApps to integrate AI inference into smart contracts.

- Gensyn: A protocol for decentralized machine learning infrastructure, focusing on scalable compute access and model training.

These protocols represent early steps into a frontier that’s still being defined. But they all share a common goal: to unbundle the power of AI from corporate monopolies and turn it into a collective digital asset — accessible, accountable, and incentivized by its users.

Use Cases of Decentralized AI Marketplaces

While decentralized AI marketplaces are still nascent, their potential applications are vast and increasingly grounded in real-world use cases. By combining blockchain’s trustless infrastructure with AI’s computational power, a new generation of dApps and protocols is emerging, solving problems that centralized systems either overlook or actively exacerbate.

4.1 Healthcare: Patient-Centric Intelligence

Problem: Centralized healthcare data is fragmented, siloed, and vulnerable to breaches. Patients rarely control their data, and AI models are often trained on limited or biased datasets.

Web3 + AI Solution:

- Patients tokenize their health records using NFTs or data tokens.

- AI models trained on aggregated, anonymized data improve diagnostics, drug development, and risk assessment.

- Zero-knowledge proofs (ZKPs) and homomorphic encryption ensure data privacy.

Example: A decentralized protocol where patients receive $HEALTH tokens for contributing anonymized medical data to train diagnostic models, with the option to withdraw or modify access at any time.

4.2 Finance: Collective Intelligence for Trading

Problem: Traditional financial markets are manipulated by insiders with privileged access to data and infrastructure.

Web3 + AI Solution:

- DAOs of data scientists compete or collaborate to create predictive models.

- Token incentives align model performance with community gain.

- Model use, ownership, and results are fully transparent and immutable.

Example: A decentralized hedge fund like Numerai, where anonymous contributors submit models and stake tokens to back their predictions. Accurate models earn rewards, while poor performance reduces the value of the contributor’s stake, creating a meritocratic, decentralized financial brain.
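The stake-and-reward mechanic can be expressed as a small settlement function. This is a simplified sketch and not Numerai's actual payout formula: the score range, the `cap` parameter, and the multiplicative update are assumptions chosen to show how accurate models grow a stake while poor ones shrink it.

```python
def settle_stake(stake: float, score: float, cap: float = 0.25) -> float:
    """Return the contributor's stake after one scoring round.

    `score` is a performance measure in [-1, 1] (assumed). It is clipped
    to +/- `cap`, so a single round can add or burn at most `cap` of the stake.
    """
    if stake < 0:
        raise ValueError("stake must be non-negative")
    clipped = max(-cap, min(cap, score))
    return stake * (1.0 + clipped)


good_round = settle_stake(100.0, 0.10)   # stake grows by 10%
bad_round = settle_stake(100.0, -0.50)   # loss is capped at 25% of the stake
```

Capping the per-round change limits how much a single lucky or unlucky round can move a stake, which is one way to make rewards track sustained performance.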

4.3 Gaming and Virtual Worlds: Smarter Autonomous Agents

Problem: NPCs (non-player characters) in games lack intelligence and adaptability, while game logic is centralized and subject to manipulation.

Web3 + AI Solution:

- AI agents evolve through community interaction.

- Decentralized games integrate intelligent NPCs governed by smart contracts.

- Player behavior feeds into adaptive models that enhance game dynamics.

Example: A metaverse game where every virtual shopkeeper, enemy, or guide is an AI agent with memory, strategy, and decision-making abilities — trained in public and owned collectively.

4.4 Supply Chains: Decentralized Optimization

Problem: Supply chains are opaque, inflexible, and slow to react to changes, especially across borders.

Web3 + AI Solution:

- IoT devices collect real-time data and feed it into AI agents for route optimization, demand forecasting, and inventory management.

- Smart contracts automate fulfillment, auditing, and compliance.

- All actions are recorded immutably on-chain for auditability.

Example: A DAO governs an AI logistics protocol that allocates resources dynamically based on weather, shipping traffic, and geopolitics, reducing waste and increasing efficiency across global supply chains.

4.5 Content Creation: Ethical and Monetized AI Outputs

Problem: Artists, writers, and musicians fear losing income and control over their work as AI-generated content explodes, much of it produced by models trained on their creations without consent.

Web3 + AI Solution:

- Creators tokenize their datasets and models.

- AI-generated content is tied to royalties via NFTs and smart contracts.

- Communities can govern the ethics of dataset usage.

Example: A music AI platform where users remix licensed loops and vocals, with royalties distributed automatically to original creators whenever derivatives are sold or streamed.

4.6 Decentralized Research and Development

Problem: Academic research is bottlenecked by institutional gatekeeping and opaque funding structures.

Web3 + AI Solution:

- Researchers tokenize hypotheses or findings as NFTs or prediction markets.

- Communities fund and track open science initiatives transparently.

- AI aggregates data across decentralized silos to accelerate discoveries.

Example: A platform like VitaDAO, where DAO members vote on longevity research proposals and fund them with crypto — with AI models helping assess and prioritize projects.

4.7 Governance and Public Goods

Problem: Bureaucratic inefficiency and corruption hinder effective governance and equitable resource allocation.

Web3 + AI Solution:

- AI models recommend budget allocations or detect anomalies.

- DAOs vote on proposals and policy decisions.

- Community-owned governance models allow for dynamic updates based on data-driven feedback.

Example: A city DAO uses AI to suggest optimal waste management routes, then funds local providers through quadratic voting and on-chain disbursements.

These examples highlight that decentralized AI isn’t a niche subdomain; it’s an emerging layer that could transform how we approach nearly every industry. It unlocks a world where intelligence, data, and value creation are shared more equitably, not hoarded behind paywalls or permissioned APIs.

Barriers to Adoption and What Needs to Change

The promise of decentralized AI marketplaces is bold — open, permissionless, and community-driven AI for everyone. But vision alone doesn't scale. Today, several practical, technical, and cultural barriers stand between the concept and widespread adoption.

Let’s break down these friction points — and explore what it will take to overcome them.

6.1 Technical Complexity and Fragmented Tooling

The average developer today faces an uphill battle trying to combine blockchain with machine learning. From spinning up smart contracts to managing large datasets on-chain, the learning curve is steep.

Current Gaps:

- Lack of developer-friendly SDKs that support both AI and Web3 primitives.

- Fragmented standards across chains for storing, querying, or executing AI models.

- Difficulty running ML workloads on-chain due to gas constraints and VM limitations.

What Needs to Change:

- Development of modular toolkits (e.g., AI-to-smart contract compilers).

- Rollup-native protocols optimized for high-compute operations (like ZKML).

- Plug-and-play integrations with major LLMs, so devs don’t have to reinvent the wheel.

Projects like Bittensor and Cortex Labs are early steps in this direction, but mainstream devs still need better abstraction layers.

6.2 Scalability and Infrastructure Bottlenecks

Running AI inference or training models requires significant compute and bandwidth, which blockchain networks have not traditionally been able to provide.

Infrastructure Bottlenecks:

- On-chain compute is expensive and slow (especially on L1s like Ethereum).

- Storage constraints make it hard to manage large datasets or model weights.

- Latency issues limit real-time AI applications.

Emerging Solutions:

- Off-chain compute layers (e.g., Cartesi, Gensyn) that offload intensive AI work and return results to chain.

- Decentralized storage (IPFS, Arweave) combined with model registries for faster access and provenance tracking.

- ZK rollups with AI-native instructions (still experimental but promising).

To truly scale, decentralized AI may require dedicated AI chains or app-specific rollups built with inference and training in mind.
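The "compute off-chain, return results to chain" pattern can be illustrated with a hash commitment and a re-execution check. This is a generic sketch and not how Cartesi or Gensyn actually verify work; the toy `run_model` function, the model identifier, and the commitment format are all assumptions.

```python
import hashlib
import json


def run_model(inputs: list[float]) -> float:
    """Toy deterministic 'model': a fixed weighted sum (stand-in for inference)."""
    weights = [0.5, 0.25, 0.25]
    return sum(w * x for w, x in zip(weights, inputs))


def commitment(model_id: str, inputs: list[float], output: float) -> str:
    """Hash that a compute node would post on-chain instead of the full job."""
    payload = json.dumps(
        {"model": model_id, "inputs": inputs, "output": output}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def verify_by_reexecution(
    model_id: str, inputs: list[float], claimed_output: float, posted: str
) -> bool:
    """A challenger re-runs the job and checks both the result and the hash."""
    return (
        run_model(inputs) == claimed_output
        and commitment(model_id, inputs, claimed_output) == posted
    )


# Off-chain node computes the result and posts only the commitment.
job_inputs = [2.0, 4.0, 8.0]
output = run_model(job_inputs)
posted = commitment("toy-model-v1", job_inputs, output)
```

Exact re-execution only works when inference is bit-for-bit deterministic; real ML workloads on heterogeneous hardware usually are not, which is part of why dedicated verification schemes and proof systems are an active area of work.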

6.3 Lack of User-Friendly Interfaces

For end-users, interacting with decentralized AI protocols can be frustrating. Wallet signatures, complex onboarding, or unintuitive model marketplaces hinder adoption, especially compared to polished AI-as-a-service products from Big Tech.

What Users Struggle With:

- Understanding how their data is used.

- Finding high-quality models or datasets.

- Managing tokens, permissions, and fees.

What Builders Must Focus On:

- Intuitive frontends with Web2-level UX.

- Clear model explainability and trust indicators.

- Gasless transactions or abstracted wallet interactions.

Ultimately, no matter how powerful the backend, if users can’t navigate the interface, they won’t adopt it.

6.4 Governance Fatigue and Token Misalignment

Decentralized systems live or die by their communities. But many DAOs and governance tokens today suffer from:

- Low voter participation.

- Wealth concentration in a few wallets.

- Poor coordination on upgrades or disputes.

In decentralized AI, this becomes even more dangerous since models and data can have real-world ethical consequences.

Ways to Address This:

- Use reputation-weighted voting instead of just token-weighted.

- Introduce quadratic voting for more democratic outcomes.

- Reward active participation in governance via token incentives or NFTs.

- Integrate governance simulations or testnets to model community decisions before they go live.

The goal isn’t perfect governance but legitimacy and resilience in how decisions are made and contested.
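To see why quadratic voting dilutes concentrated wealth, consider this small sketch. Under quadratic voting, casting n votes on an option costs n² credits, so the votes obtained from a budget are the integer square root of the credits spent. The ballot structure and voter names are made up for illustration.

```python
import math


def votes_for_credits(credits: int) -> int:
    """Under quadratic voting, n votes cost n**2 credits."""
    if credits < 0:
        raise ValueError("credits must be non-negative")
    return math.isqrt(credits)


def tally_quadratic(ballots: dict[str, dict[str, int]]) -> dict[str, int]:
    """ballots maps voter -> {option: credits spent on that option}."""
    totals: dict[str, int] = {}
    for allocations in ballots.values():
        for option, credits in allocations.items():
            totals[option] = totals.get(option, 0) + votes_for_credits(credits)
    return totals


def tally_token_weighted(ballots: dict[str, dict[str, int]]) -> dict[str, int]:
    """Baseline for comparison: one credit, one vote."""
    totals: dict[str, int] = {}
    for allocations in ballots.values():
        for option, credits in allocations.items():
            totals[option] = totals.get(option, 0) + credits
    return totals


# One large holder backs option A; five small holders back option B.
ballots = {"whale": {"A": 100}}
ballots.update({f"voter{i}": {"B": 9} for i in range(5)})

quadratic = tally_quadratic(ballots)
linear = tally_token_weighted(ballots)
```

In this example the token-weighted tally gives A 100 to B's 45, while the quadratic tally gives A 10 to B's 15, so the broader coalition wins. Quadratic voting does assume some resistance to Sybil attacks, since splitting funds across many wallets would otherwise defeat the square-root cost.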

6.5 Regulation and Legal Uncertainty

One of the biggest elephants in the room: no one really knows how decentralized AI will be regulated. Governments are still trying to catch up with AI, let alone blockchain.

Risks:

- Developers or operators could be held liable for AI outputs under local laws.

- Decentralized data usage could violate privacy regulations (e.g., GDPR).

- Tokenized incentive structures may be seen as securities.

Strategies to Navigate This:

- Build compliance-aware frameworks (e.g., opt-in KYC layers, jurisdictional access control).

- Partner with legal researchers and regulatory sandboxes.

- Design for auditable transparency, so regulatory bodies can inspect decisions, models, or data usage without compromising decentralization.

Until global frameworks evolve, decentralized AI protocols must self-regulate with transparency and rigor.

Summary: A Path Forward

The core blockers to decentralized AI adoption (technical complexity, infrastructure constraints, governance limitations, and legal ambiguity) are not unsolvable. They require coordinated innovation from across the Web3 and AI communities.

Think of where DeFi was in 2018: fragmented, experimental, and misunderstood. Then fast-forward to 2021, when it became a multibillion-dollar industry. Decentralized AI could follow a similar trajectory, but only if we proactively address these barriers.

Final Thoughts

The convergence of Web3 and artificial intelligence is no longer a fringe idea; it’s a paradigm shift underway.

Centralized AI, while powerful, remains confined to closed ecosystems that prioritize profit over ethics, surveillance over privacy, and opacity over understanding. Web3 challenges that paradigm with an alternative: one rooted in transparency, permissionlessness, and collective ownership.

Decentralized AI marketplaces represent the first meaningful step toward reimagining the power structures behind machine intelligence. They give data back to its rightful owners. They allow developers across the globe to collaborate without gatekeepers. They replace extractive economics with regenerative, community-aligned value loops.

But ideals alone are not enough.

There are still major technical and cultural challenges to overcome — from scalability and composability to governance and usability. The infrastructure is being laid, but it needs more builders, more dreamers, and more interdisciplinary thinkers to bring it to life.

This isn’t just a call to developers. It’s an invitation to artists, ethicists, researchers, designers, and anyone who believes that intelligence, artificial or not, should serve humanity, not control it.

In the end, the future of AI doesn’t belong to a handful of trillion-dollar companies. It belongs to all of us, if we’re willing to build it.

Let’s make sure we don’t just create smarter machines — let’s create a smarter system for developing, governing, and sharing those machines. A system where innovation doesn’t come at the cost of privacy, fairness, or freedom.

The decentralized AI movement is still in its early chapters. But make no mistake: the story is already being written.
